This book is a sequel to Statistical Inference: Testing of Hypotheses (published by PHI Learning). Intended for postgraduate students of statistics, it introduces the problem of estimation in the light of the foundations laid down by Sir R.A. Fisher (1922) and follows both classical and Bayesian approaches to solving these problems.

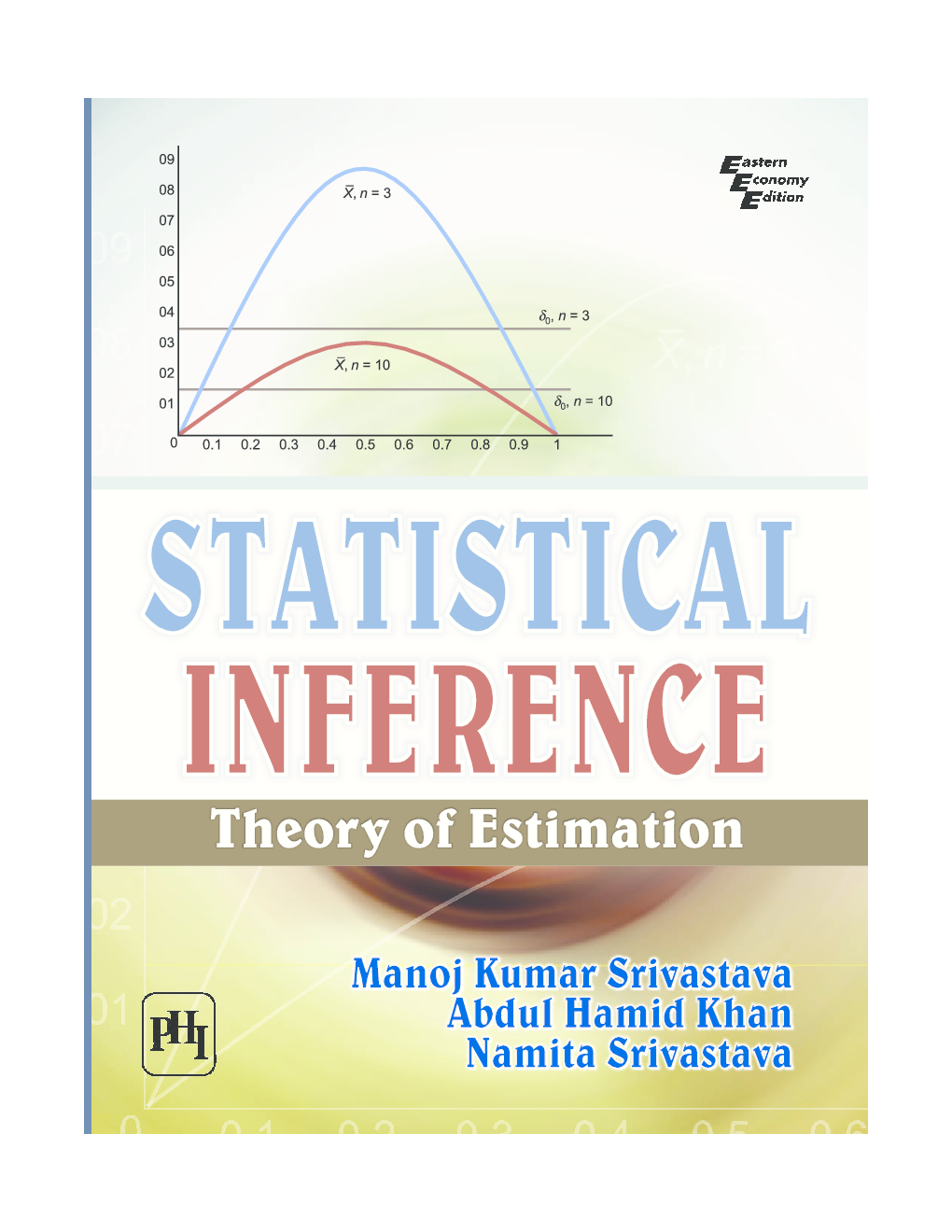

The book starts by discussing the growing levels of data summarization needed to reach maximal summarization and connects this with sufficient and minimal sufficient statistics. It gives a complete account of theorems and results on uniformly minimum variance unbiased estimators (UMVUE), including the famous Rao-Blackwell theorem, which suggests an improved estimator based on a sufficient statistic, and the Lehmann-Scheffé theorem, which gives a UMVUE. It discusses the Cramér-Rao and Bhattacharyya variance lower bounds for regular models, introducing Fisher information, and the Chapman, Robbins and Kiefer variance lower bounds for Pitman models. Besides, the book introduces different methods of estimation, including the famous method of maximum likelihood, and discusses large sample properties such as consistency, consistent asymptotic normality (CAN) and best asymptotic normality (BAN) of different estimators.

Separate chapters are devoted to finding the Pitman estimator among equivariant estimators for location and scale models, by exploiting the symmetry structure present in the model, and to Bayes, empirical Bayes and hierarchical Bayes estimators in different statistical models. The systematic exposition of the theory and results in different statistical situations and models is one of the several attractions of the presentation. Each chapter concludes with several solved examples, in a number of statistical models, augmented with exposition of theorems and results.

KEY FEATURES

Provides clarifications for a number of steps in the proofs of theorems and related results.

Includes numerous solved examples to improve analytical insight into the subject by illustrating the application of theorems and results.

Incorporates chapter-end exercises to review students' comprehension of the subject.

Discusses the detailed theory of data summarization, unbiased estimation with large sample properties, and Bayes and minimax estimation in separate chapters.